Missed this news, tell me.

Is CSAM scanning enabled or not in the latest iOS release?

"CSAM scanning" has never been implemented by Apple in any of its software releases. As quoted in the article you're commenting on, Apple stated: "We have further decided to not move forward with our previously proposed CSAM detection tool for iCloud Photos." This is the latest news on this topic.

Apple Abandons Controversial Plans to Detect Known CSAM in iCloud Photos

A version of the NeuralHash model was found in iOS 14.3. The ML model was extracted and placed on GitHub. Devs even built websites of non-CSAM images that triggered the CSAM positive identification routines.

The meme would be more effective if the picture on the right wasn’t a tv snow pattern, but for example a cat. But I doubt they would map to the same hash.

In the GitHub thread there are better matches with the same hash, such as two photos with totally different content that both look like normal photos.

Anyway, I took the time to go through this talk again: https://www.apple.com/105/media/us/...enix-security-symposium-tpl-us-2021_16x9.m3u8 It's actually interesting: they had planned a lot more safeguards than I remembered, especially for this kind of attack.

They were actually planning to use a second algorithm on their servers, different from NeuralHash (which would have run on the devices), to double-check any positive matches, making it unlikely that these "fake" pictures would be recognized as CSAM by the overall system. And of course, they also promised to use human reviewers at the end.

Still, I sleep better without any CSAM scanning going on. But at least, their implementation of it was considerably better than what Microsoft/Google etc. are doing.
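To make the double-check idea above a bit more concrete, here is a minimal Python sketch. Big caveats: the two hash functions are toy stand-ins (a simple average hash and a difference hash over tiny grayscale grids), not NeuralHash and not whatever Apple planned to run on its servers, and the names `average_hash`, `difference_hash`, and `flag_for_review` are made up for illustration. It only shows why an image crafted to collide under one perceptual hash is unlikely to also match under a second, independent one. The human review step is left out.

```python
from typing import List, Set

Image = List[List[int]]  # tiny grayscale image, pixel values 0-255


def average_hash(img: Image) -> int:
    """Toy stand-in for the on-device hash: one bit per pixel, set if above the mean."""
    pixels = [p for row in img for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def difference_hash(img: Image) -> int:
    """Toy stand-in for the server-side hash: one bit per horizontally adjacent pair."""
    bits = 0
    for row in img:
        for left, right in zip(row, row[1:]):
            bits = (bits << 1) | (1 if left > right else 0)
    return bits


def flag_for_review(img: Image, device_db: Set[int], server_db: Set[int]) -> bool:
    """Flag only if BOTH independent hashes match a known entry (hypothetical flow)."""
    if average_hash(img) not in device_db:
        return False  # first stage: no match, nothing happens
    return difference_hash(img) in server_db  # second stage: independent double-check


# A "known" image and a different image built to collide under the first hash only.
known: Image = [[200, 220, 50], [50, 40, 200]]
collider: Image = [[220, 200, 50], [40, 50, 200]]

device_db = {average_hash(known)}
server_db = {difference_hash(known)}

print(average_hash(collider) == average_hash(known))   # first-stage collision
print(flag_for_review(known, device_db, server_db))    # known image passes both stages
print(flag_for_review(collider, device_db, server_db)) # collider is stopped at stage two
```

The point of the design is that an attacker who has extracted the on-device model can search for collisions against it, but cannot do the same against a second hash they have never seen.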

Yes, this check was added after the initial uproar by the security community. Apple went through a couple of revisions to their CSAM scanning explainer page as weaknesses in their implementation were pointed out by researchers.

Right so I can upgrade from my holding pattern of iOS 14 to iOS 16 without any worries?

That's amazing. I seem to have missed all the action. The CSAM scanning was a real deal breaker for me. I've had enough of all this tech being used against people and nations, you know, not just by Apple.

It also pushed me to dig out my 12-year-old SD camera and start using it again for a bit of fun. It has the same MP as the iPhone but is not as fast and has none of the processing; still, it's a bit of uncurated, "oh, I forgot about that picture..." fun. I even started to think about getting a new dedicated digital camera for the pocket. Betrayal is never good, and once a brand or person does it you can never trust them again. I feel the same sentiment here: Apple screwed up big time on this one.

So overall, do users rate the latest version of iOS as free of CSAM scanning, or at least as having it inactive? Totally safe, or as good as?

Reading some more, I'm wondering: could it be switched on remotely at a granular, per-device level?

I know this is a hypothetical hack, but if the latest build has it in the codebase, albeit unused, what's to stop a remote exploit from, as it were, uncommenting the code and running it in the background, unbeknownst to the user and maybe even Apple?

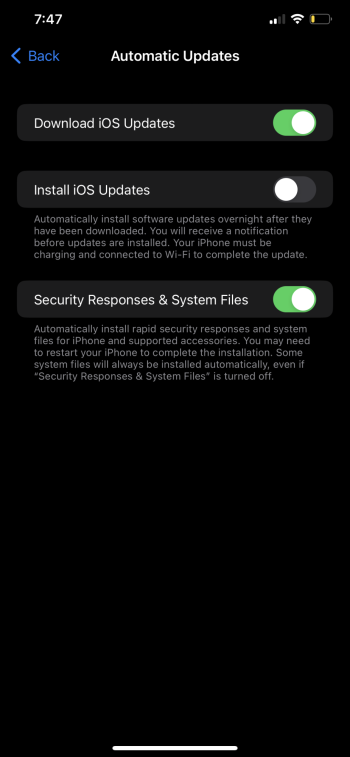

Just be aware that when you upgrade to iOS 16, Apple has the ability to download security updates without your consent.

I stay current as I don't want my phone to be hacked by some malicious website.

I started using my DSLR, not because of CSAM, but because it takes pictures differently than what can be taken with a smartphone. I can still trust Apple; YMMV.

Nothing. If you are going to try outsmarting Apple (or Microsoft, or Google), you're barking up the wrong tree.

Thanks and hmmm I did not know this… any good threads discussing this? 🤔

Check the main news feed. I'm sure it's in one of the articles. But it won't say much more than what I said.

If I have understood what I have read correctly, then it may be possible to disable this form of auto-update in Settings.

Since I am not using iOS 16, I can’t check 🥸

Found it. Yeah, users can disable it, but it’s on by default. I think I can live with that.

Read the fine print.

Indeed. So I think that was missed in the MR article: an innocent omission, or did I not read the whole thing properly? Hmmm…

Also, I’ve hit a wall: some websites do not support this version of Safari, and installing Firefox was no way around it. I innocently thought that non-Safari browser compatibility was generally not linked to the iOS version, but I guess I’m wrong.

Maybe a different browser try I should.

All browsers on iOS use the rendering engine built into the operating system. By not updating your iOS version you will therefore always lag behind in terms of rendering web content, regardless of which app you’re using. Also, you’re probably missing out on security fixes.

Your call, obviously. Personally I think the threat from random hackers is bigger than the things discussed here in this topic, but everyone’s threat model is different anyway, so weigh up your options for yourself.

The rendering engine is two-plus years old, and some websites may be using newer web standards. Either way, it’s your call to upgrade or not.

I’ll have to upgrade, but I no longer trust Apple. It’s a very poor situation, but the way the world is, no surprises.

While the issues might seem different, on a fundamental level Apple has given itself one, if not potentially two, backdoors.

It's not great, when trust is bust, to lose control over access to a personal computing device.

Less I phone and more Borg Phone.

Thanks for updating on the very important nuance of the fine print.

Apple Has Begun Scanning Users Files EVEN WITH iCloud TURNED OFF

As pointed out earlier, mediaanalysisd isn't nefarious, and it isn't new. We already know macOS scans our photos. That's how Apple provides features such as object and people recognition. That can't happen unless the photos are scanned.

Well, I don’t use iCloud for photos, but I do for files, for the convenience of having access to projects, work, etc. between devices or machines, and I am totally aware that “it’s somebody else’s computer.” 🥸

I used to use Little Snitch. Must try it again.

However, it’s not fully clear exactly what is going on. I can transfer files or photos from the iPhone to macOS without being connected to the net.

So what happens there?

When is the scanning event supposed to occur: during the transfer or after?

I would have assumed the scanning results for people and objects transfer from the iPhone to iPhoto, since that’s all done on device and the A-series chip is, I think, customised to perform this task well. So what’s new?

Or what more should I be worried about now!?

Apple is a company that lies about caring about privacy; they do not ever deserve the benefit of the doubt. You stating that mediaanalysisd isn't nefarious does not make it so.

You’re the one simply stating an opinion. I provided a link to a detailed third-party analysis of the feature.
